Ansible Playbook for provisioning a Graylog central logging server

I first used Graylog a few years back while working for a tech startup in New York City. I was really impressed with it, and it was probably the best log management software I'd used up to that point.

I've been meaning to set one up for Highview Apps for some time now, as we have 5 servers for the different Django apps we've built and will soon be adding another 3. Without centralized logging, we have to log in to each server to check the logs when there's an issue, or just to get a quick look at what the apps are doing. You can see how this can be annoying and time-consuming.

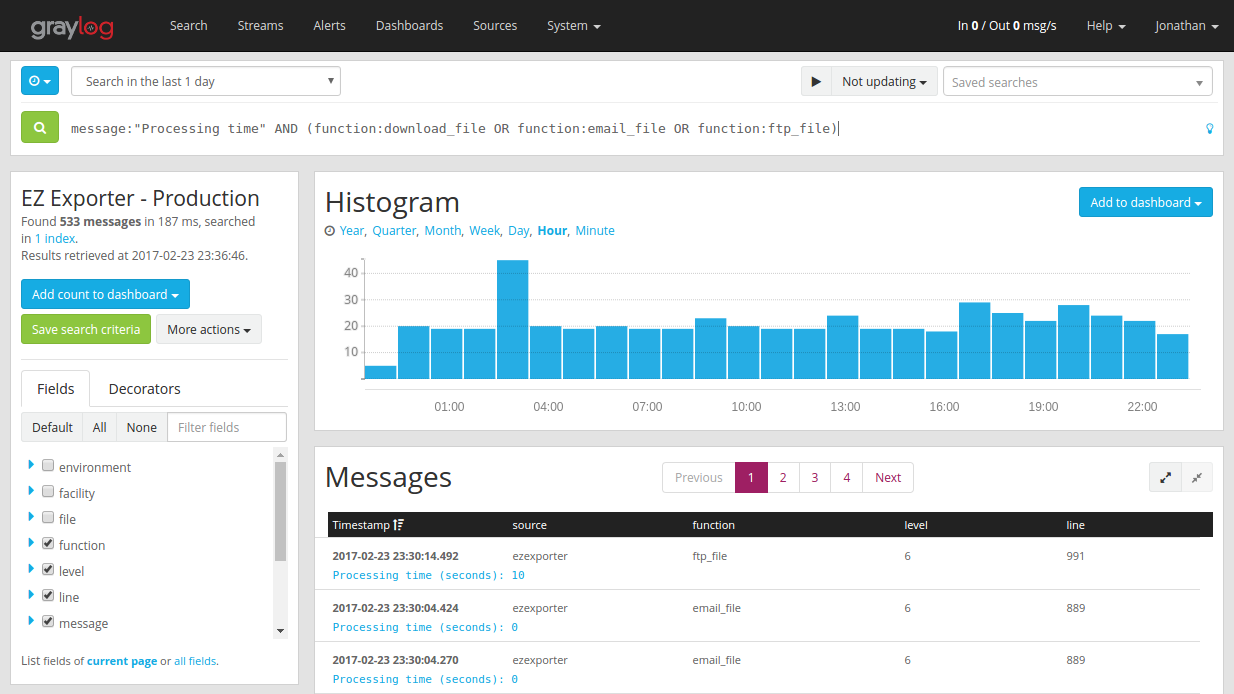

We've also built our apps to scale horizontally. For example, our app EZ Exporter relies heavily on Celery background workers to generate reports for our customers. We've reached the point where we'll soon need to add one more worker node to handle the additional load as our customer base grows. If a task fails, we first have to work out which node ran it, then SSH to that server to check the logs.

Now, if you have multiple worker nodes processing many tasks and several of those tasks fail, the failures will likely have run on different nodes, since tasks are distributed across the worker processes in a round-robin fashion. You can see how this can be a headache to debug. Then multiply that by the number of applications you're managing.

We're getting close to finishing our third Shopify app, so we decided it was time to set up a central logging server. I naturally went with Graylog, and I'm very happy to see that the latest version (v2.2) has improved quite a bit since the last version I used.

My co-founder, who is also an engineer/developer, and I are both big fans of automation and Ansible. We want to be able to rebuild the server from scratch within a few minutes with a one-line command in case things go wrong, so we decided to spend a little more time on this and create an Ansible playbook.

Graylog actually has an official Ansible playbook, but I found it a bit more complicated than I'd like because it's designed to support different Linux distros and versions. So I created a separate one that is much simpler but only works with Ubuntu, which is what all of our servers run.

Another thing I wanted to include in the playbook is a Let's Encrypt SSL certificate, with a cron job to automatically renew the certificate. We use Let's Encrypt for everything in our environment that uses Nginx.
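For illustration, a renewal cron job can be expressed with Ansible's `cron` module along these lines. This is only a sketch and not copied from the playbook: the schedule, the `--post-hook` reload, and the task name are my assumptions here.

```yaml
# Sketch only: the schedule and the Nginx reload hook are assumptions,
# not the exact task from the playbook.
- name: Renew Let's Encrypt certificates twice a day
  cron:
    name: "certbot renew"
    user: root
    minute: "17"
    hour: "3,15"
    # certbot only renews certificates that are close to expiring,
    # so running this often is harmless.
    job: "certbot renew --quiet --post-hook 'service nginx reload'"
```

Reloading Nginx after a renewal matters because Nginx keeps serving the old certificate until it re-reads its configuration.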

Just to summarize what our Graylog Ansible Playbook does:

- Install MongoDB

- Install and configure Elasticsearch v2.x

- Install and configure Graylog v2.2

- Install certbot (Let's Encrypt client)

- Install Nginx

- Configure Nginx to use an SSL cert and force https connections

- Configure and enable UFW (Uncomplicated Firewall)
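
To give a rough idea of how steps like these map onto a playbook, a top-level file could look something like the following. Treat it as a sketch: the file name, the `graylog` host group, and every role name except `ufw` are hypothetical and may not match the repository.

```yaml
# site.yml (hypothetical file name; role names other than ufw are
# assumptions for illustration, one role per step in the list above)
- hosts: graylog
  become: yes
  roles:
    - mongodb
    - elasticsearch
    - graylog
    - certbot
    - nginx
    - ufw
```

With a layout like this, the "one-line command" to rebuild the server would be something along the lines of `ansible-playbook -i inventory site.yml`, where `inventory` is again a placeholder for your own inventory file.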

A few things worth mentioning:

- The playbook is designed to run Graylog on a single-node master server.

- The RAM requirement for Graylog is 4GB. However, we're running our instance on a $5/month DigitalOcean VPS with 1 vCPU and 512MB of RAM. To get around the memory requirement, we simply enabled a 4GB swap file (this is already set by default in our playbook). When the Graylog service first starts and when you first visit the web admin, it can take some time for things to load. But once past that stage, it actually runs quite well.

- By default, we have UFW configured to accept outside connections only on ports 22, 80, and 443 (you can change the settings in the ufw role's defaults/main.yml file). To send messages to the Graylog server, you'll need to add firewall rules to accept connections for whichever port/protocol you'll be using to receive log messages.

- Be sure to adjust the index rotation and retention strategies from the web admin so you don't unexpectedly run out of disk space.
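
As a rough sketch of the swap file and firewall notes above, the tasks below show one way to do both in Ansible. These are not the tasks from the playbook: the `/swapfile` path, the registered variable name, and the choice of port 12201/UDP (the port conventionally used for a GELF UDP input) are assumptions, so use whatever port and protocol your own Graylog input listens on.

```yaml
# Swap file: path and size are assumptions for illustration.
- name: Allocate a 4GB swap file
  command: fallocate -l 4G /swapfile
  args:
    creates: /swapfile        # skip if the file already exists
  register: swapfile_created

- name: Restrict swap file permissions
  file:
    path: /swapfile
    owner: root
    group: root
    mode: "0600"

- name: Format and enable the swap file
  shell: mkswap /swapfile && swapon /swapfile
  when: swapfile_created.changed

- name: Keep the swap file across reboots
  lineinfile:
    path: /etc/fstab
    line: "/swapfile none swap sw 0 0"

# Firewall: open an extra port for incoming log messages.
# 12201/udp is an assumed GELF UDP input, not a playbook default.
- name: Allow GELF UDP messages through UFW
  ufw:
    rule: allow
    port: "12201"
    proto: udp
```

If only your own app servers send logs, it may be worth restricting the rule to their addresses with the `ufw` module's `from_ip` option rather than opening the port to everyone.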

You can download the playbook here: https://github.com/jcalazan/ansible-graylog